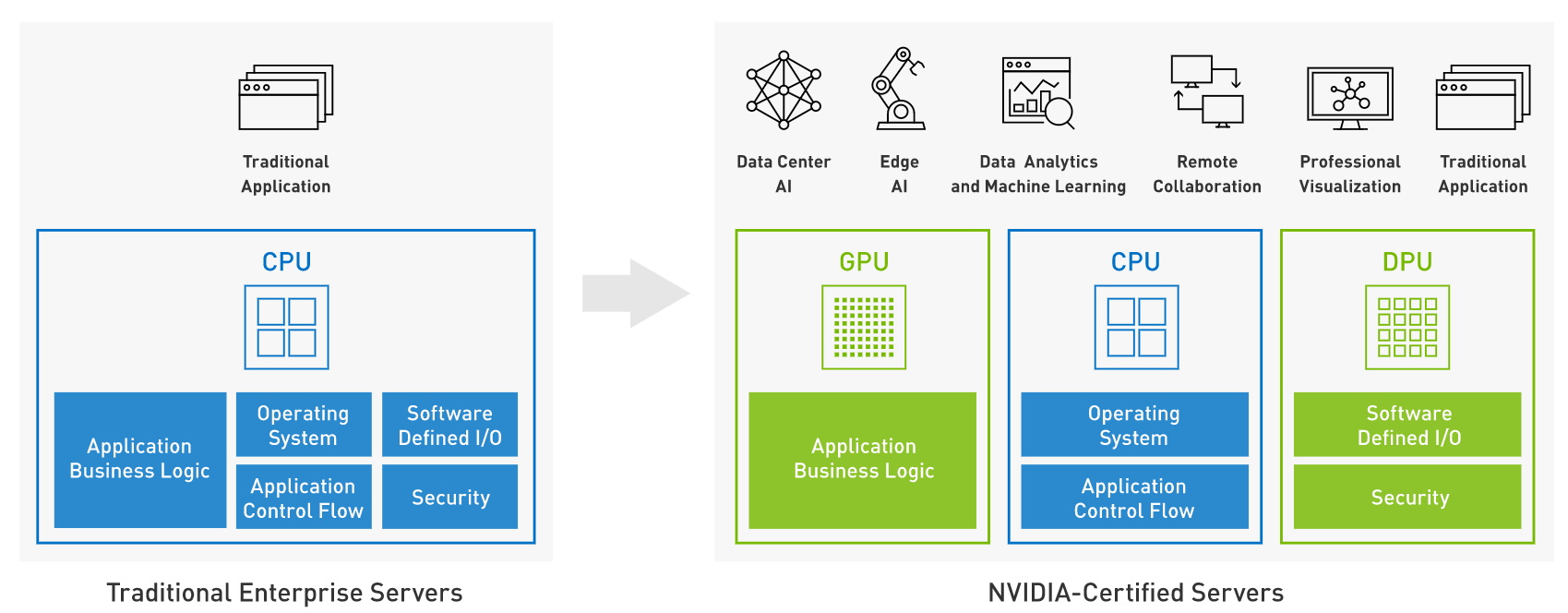

NVIDIA announces the latest development for its accelerated computing initiatives - HardwareZone.com.sg

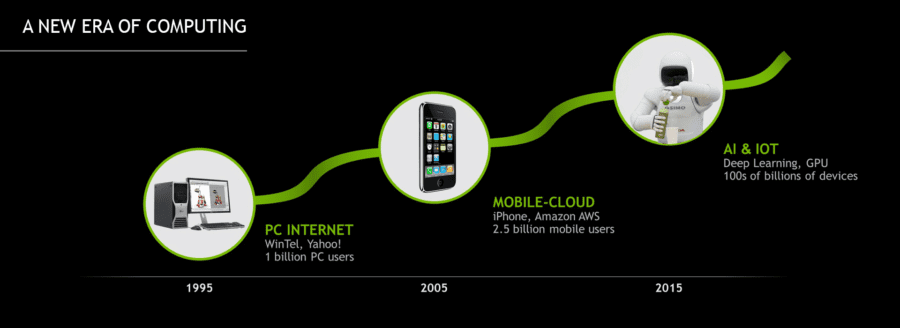

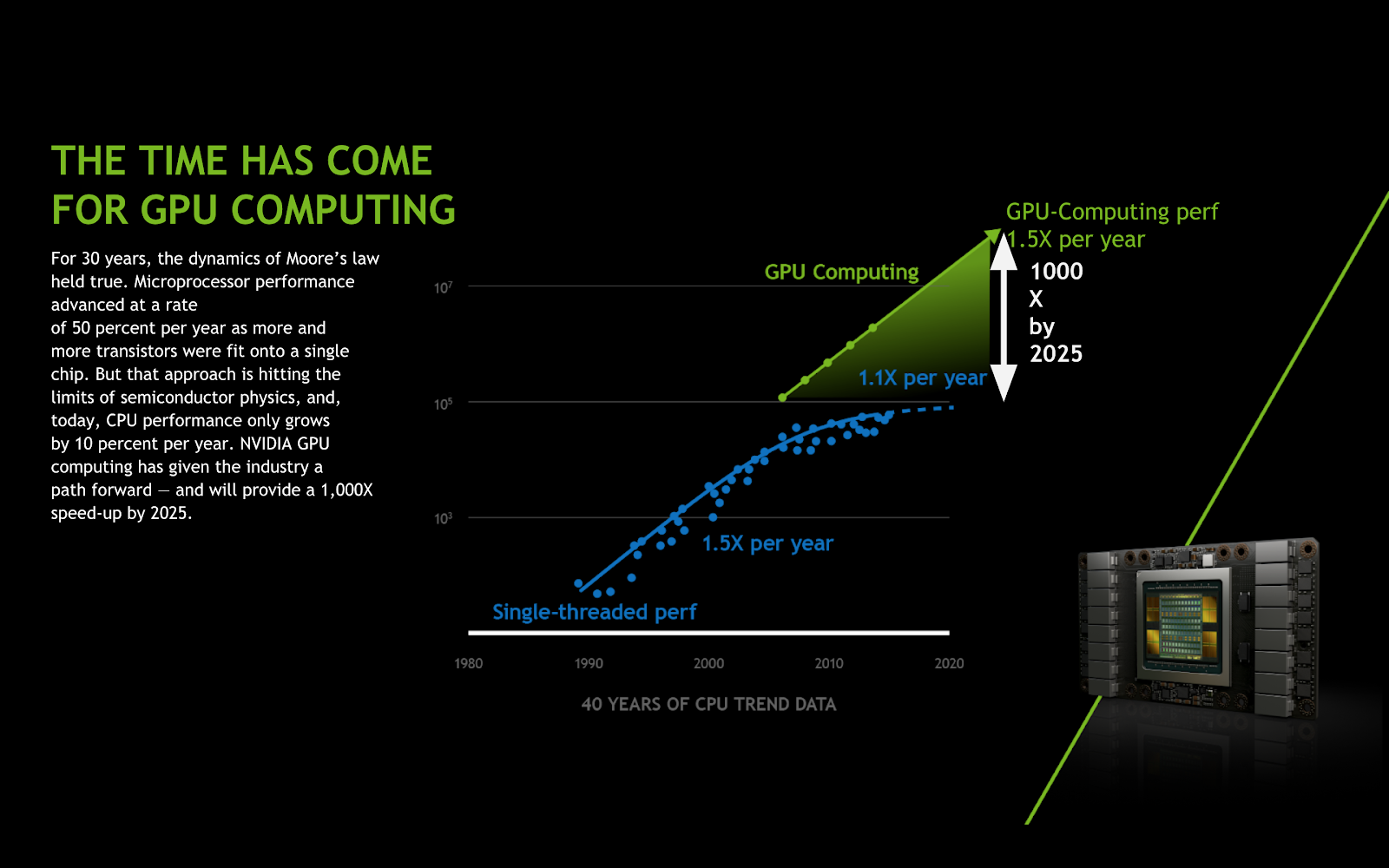

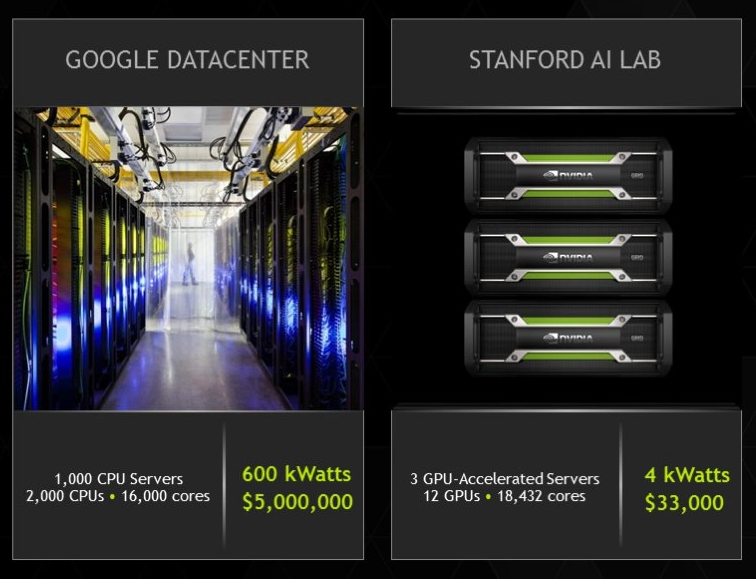

GPU-Accelerated Computing. I don't believe when someone says … | by Crypto1 | Analytics Vidhya | Medium

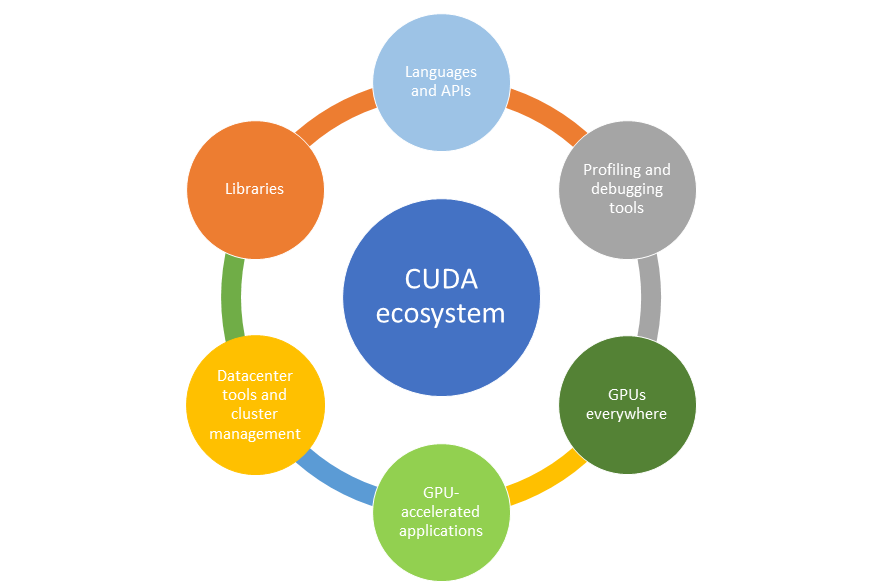

GPU-Accelerated Computing with Python | Information Technology @ UIC | University of Illinois Chicago

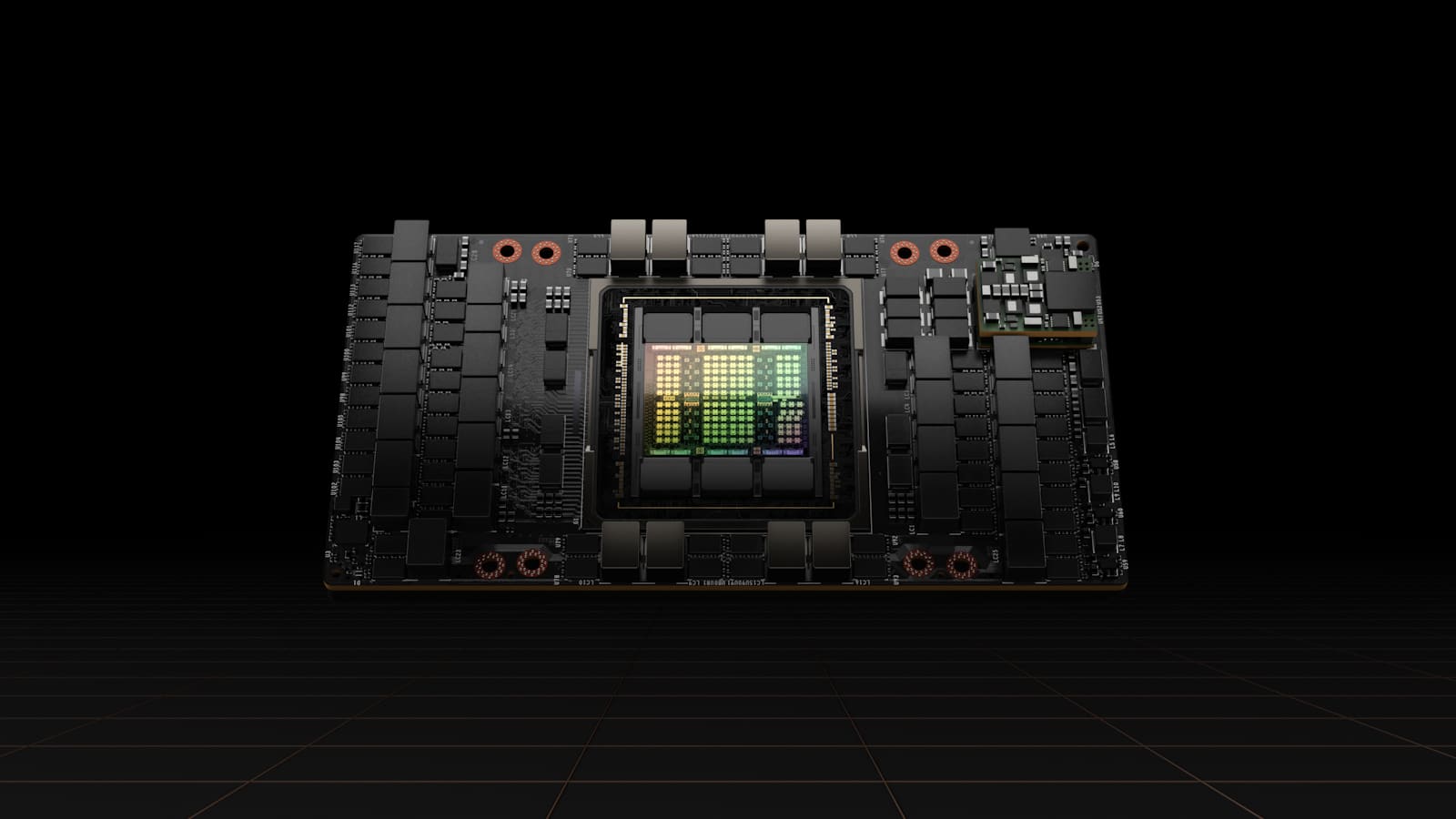

Nvidia Tesla K20 K80 M40 Graphics Card 24gb Gpu Accelerated Computing Card Ai Deep Learning Card - Flanges - AliExpress

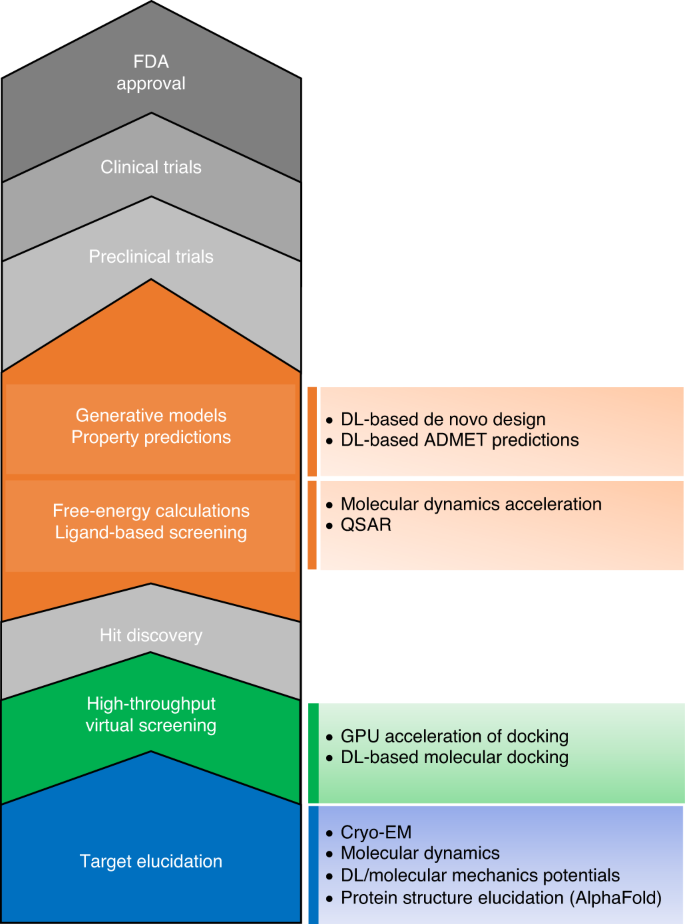

The transformational role of GPU computing and deep learning in drug discovery | Nature Machine Intelligence